AI-Washing and Moat Myths: Separating Real Defensibility from Hype

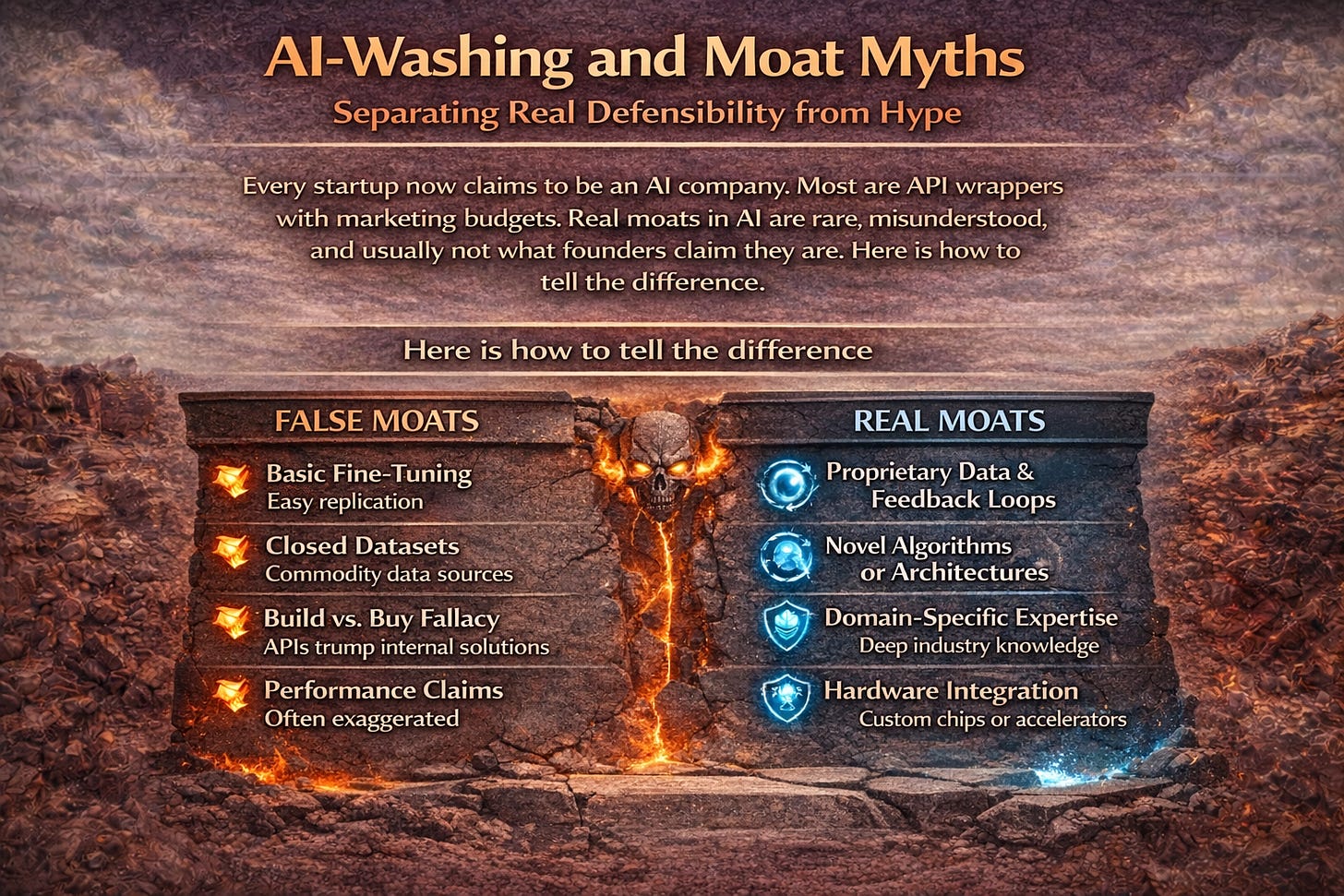

Every startup now claims to be an AI company. Most are API wrappers with marketing budgets. Real moats in AI are rare, misunderstood, and usually not what founders claim they are. Here is how to tell the difference.

AI-washing is the new greenwashing. Companies slap AI on everything to boost valuations, attract talent, and impress investors. But most AI companies have no defensible moat. They are wrapping OpenAI APIs with nice interfaces. When GPT-5 arrives with native features, their entire business evaporates overnight.

10 KEY TAKEAWAYS - AI-WASHING AND MOAT REALITY

1. AI-washing inflates valuations artificially: Adding AI to company positioning commands roughly 42% higher seed-stage valuations regardless of actual technology.

2. API wrappers are not AI companies: Calling OpenAI APIs and adding UI does not create defensible technology differentiation.

3. Data moats are overestimated: Most companies claiming data moats have data that is either not unique, not large enough, or not defensible.

4. Model moats are temporary: Foundation model improvements obsolete custom models within 12-18 months.

5. Integration moats are undervalued: Deep workflow integration creates switching costs that survive technology commoditization.

6. Network effects in AI are rare: True data network effects require user-generated data that directly improves the product for others.

7. Compliance moats are durable: HIPAA compliance, SOC 2 certification, and FDA clearance take 12-36 months to achieve and create real barriers.

8. Distribution moats beat technology moats: Access to customers matters more than marginally better AI in most markets.

9. Due diligence must evolve: VCs need technical depth to distinguish real AI from AI-washing and assess moat durability.

10. The VC Risk Swap rewards real moats: Milestone funding validates defensibility through demonstrated progress rather than claimed differentiation.

📚 READING PREREQUISITES

This post builds on concepts from earlier posts including AI company taxonomy, compute economics, and valuation challenges. Understanding the different types of AI companies and their risk profiles provides essential context for evaluating moat claims.

Recommended Prior Reading:

• Post 2: Pure AI vs. AI-Enabled - The Taxonomy That Determines Fundability

• Post 5: Enterprise vs. Consumer AI - Why B2B Is the Only Sustainable Path

• Post 6: Valuation Paralysis - Why Traditional Frameworks Fail AI Startups

• Series overview available at SaferWealth.com

The AI-Washing Epidemic: When Everyone Claims to Be AI

Walk through any startup pitch competition in 2025 and you will hear AI mentioned in every single company description. Marketing automation platform? Now it is an AI-powered marketing platform. CRM tool? AI-driven customer intelligence. Scheduling app? AI scheduling assistant. Even companies with zero machine learning in their stack claim AI capabilities because the valuation premium is too attractive to ignore.

According to PitchBook data, startups that include AI in their positioning command roughly 42% higher valuations at seed stage compared to similar companies without AI branding. That premium creates irresistible incentive to AI-wash regardless of actual technology.

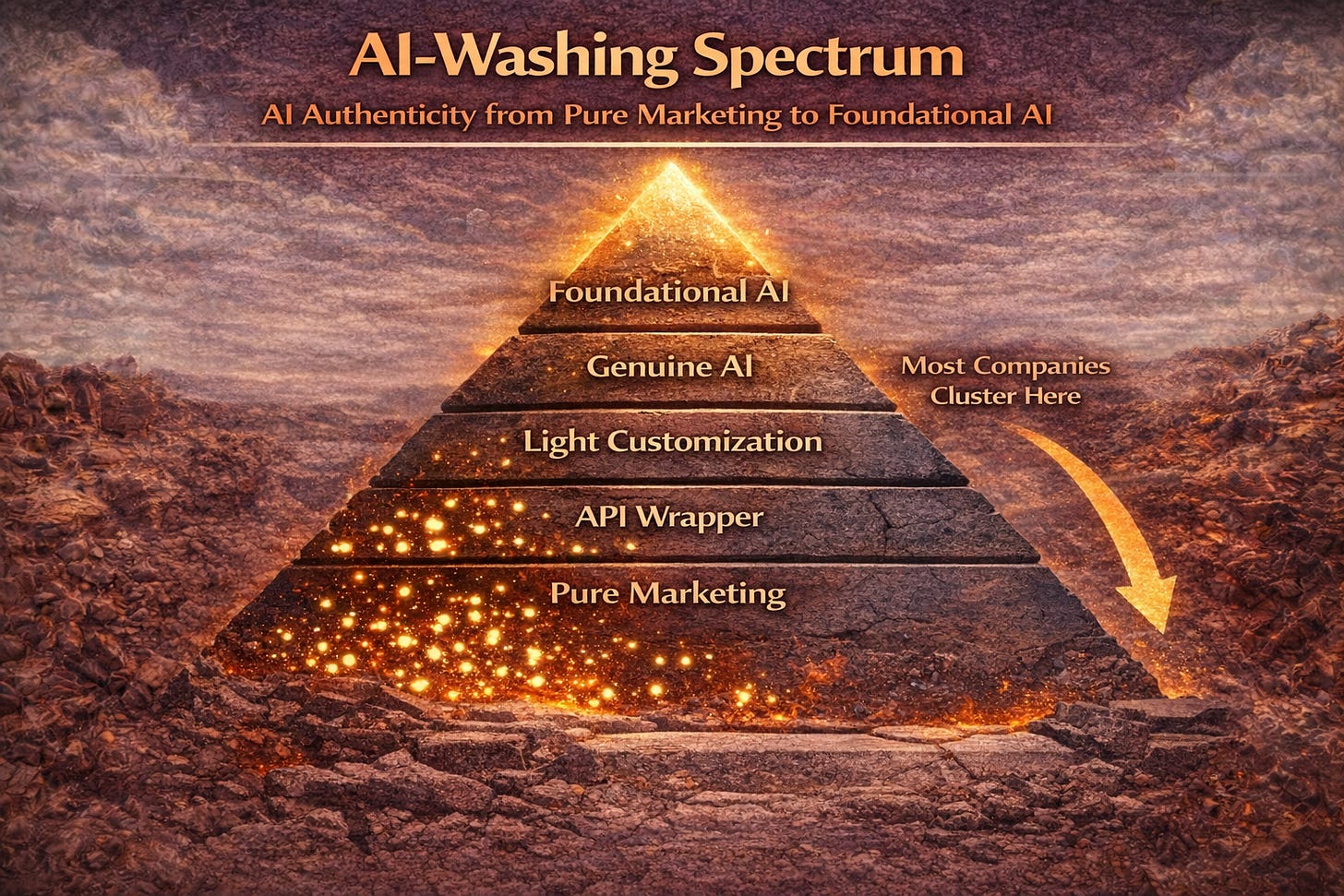

The AI-Washing Spectrum:

• Pure marketing: No AI anywhere, just added to pitch deck because it sounds good

• API wrapper: Calls OpenAI or Anthropic APIs, adds a nice interface, claims AI company status

• Light customization: Fine-tuned models or custom prompts on top of foundation models

• Genuine AI: Proprietary models, unique training data, real technical differentiation

• Foundational AI: Building new model architectures and advancing the field

Most AI companies claiming venture-scale valuations are somewhere between API wrapper and light customization. That is not inherently bad, but it is not defensible, and pretending otherwise destroys value when reality emerges.

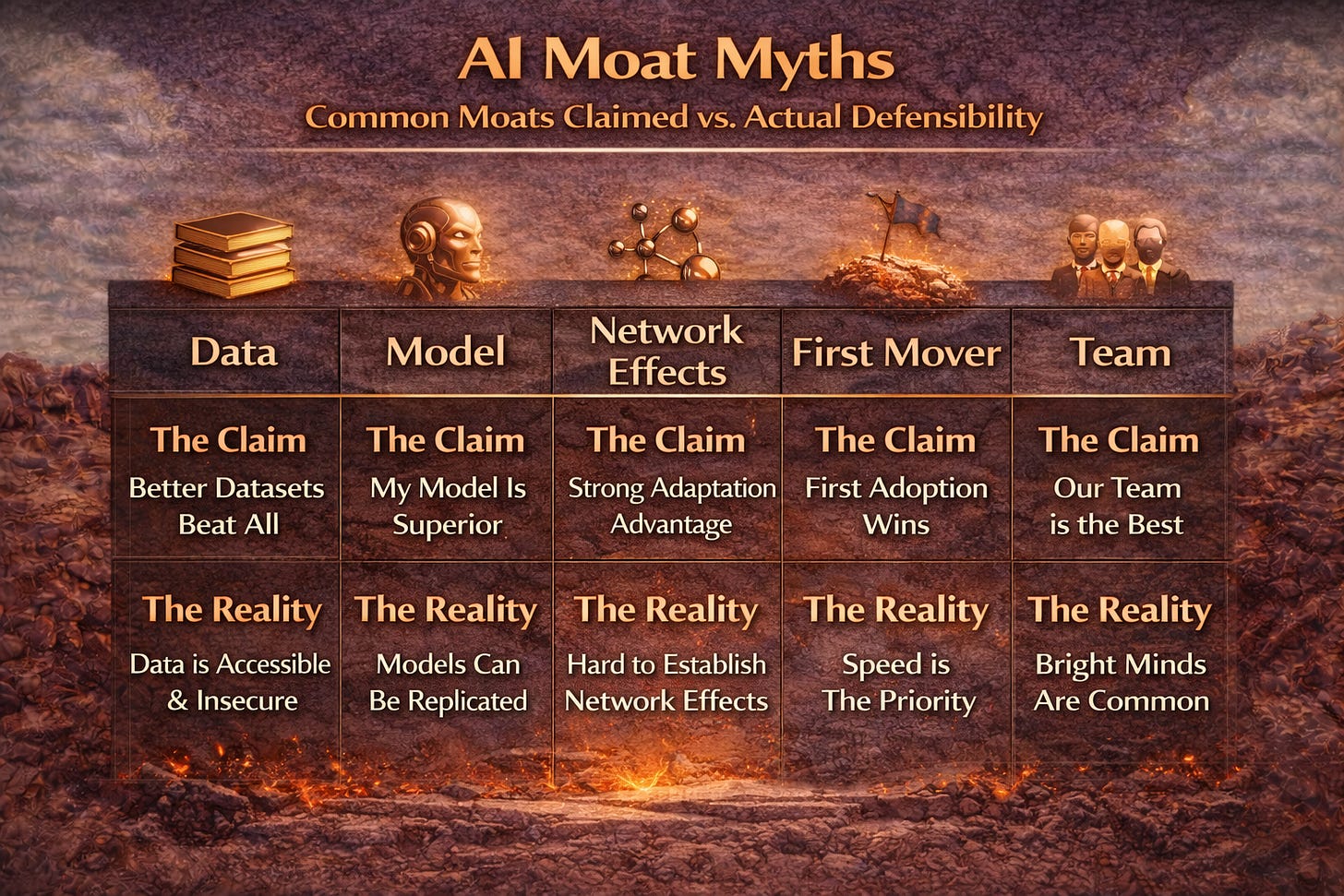

The Five Moat Myths Every AI Startup Claims

Beyond AI-washing, there is a related problem: moat mythology. Founders claim defensibility they do not actually have. Here are the five most common moat myths:

Myth 1: We Have a Data Moat

The claim: We have accumulated proprietary data that makes our models better than anyone else’s.

The reality: Most data moat claims fail three tests. First, is the data actually unique? If competitors can acquire similar data through partnerships, scraping, or purchases, it is not a moat. Second, is the data large enough to matter? Foundation models trained on internet-scale data often outperform specialized models trained on smaller proprietary datasets. Third, is the data legally defensible? Many companies discover their data collection has regulatory or IP issues when they try to scale.
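The three tests above can be written down as a simple diligence checklist. The sketch below is illustrative only: the class, field names, and the sample answers are hypothetical, and each yes/no answer still has to come from real investigation.

```python
from dataclasses import dataclass


@dataclass
class DataMoatClaim:
    """Hypothetical diligence record; field names are illustrative."""
    competitors_can_acquire_similar: bool  # via partnerships, scraping, or purchases
    beats_foundation_model_baseline: bool  # measured on the startup's own task
    has_regulatory_or_ip_issues: bool      # e.g. unclear consent or licensing


def failed_tests(claim: DataMoatClaim) -> list[str]:
    """Return the tests the claim fails (an empty list means it passes all three)."""
    failures = []
    if claim.competitors_can_acquire_similar:
        failures.append("uniqueness")
    if not claim.beats_foundation_model_baseline:
        failures.append("scale")
    if claim.has_regulatory_or_ip_issues:
        failures.append("legal defensibility")
    return failures


# Made-up example: data that competitors could buy and that does not beat a foundation model.
claim = DataMoatClaim(
    competitors_can_acquire_similar=True,
    beats_foundation_model_baseline=False,
    has_regulatory_or_ip_issues=False,
)
print(failed_tests(claim))  # ['uniqueness', 'scale']
```

A claim that fails any one test is not a moat yet, however large the dataset sounds in the pitch.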

Myth 2: Our Model Is Better

The claim: We have built a proprietary model that outperforms alternatives on key benchmarks.

The reality: Model advantages are temporary. GPT-4 obsoleted countless specialized models overnight. Claude and Gemini continue the pattern. Your better model today is table stakes in 18 months. Foundation model improvements are relentless and funded by billions of dollars you cannot match.

Myth 3: We Have Network Effects

The claim: More users generate more data, which improves our models, which attracts more users.

The reality: True network effects in AI are rare. Most AI products do not actually improve meaningfully from marginal user data. The feedback loop sounds compelling but rarely creates exponential defensibility. Ask: does the 1,000th user’s data make the product noticeably better for the 1,001st user? Usually not.
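One way to see why the marginal user rarely matters is to assume model error falls along a power-law learning curve as data grows. That curve shape and the exponent of 0.3 are assumptions chosen for illustration, not measurements from any product.

```python
def error_rate(n_users: int, exponent: float = 0.3) -> float:
    """Assumed power-law learning curve: error falls as n_users ** -exponent."""
    return n_users ** -exponent


def marginal_gain(n_users: int, exponent: float = 0.3) -> float:
    """Relative error reduction from adding one more user's data."""
    before = error_rate(n_users, exponent)
    after = error_rate(n_users + 1, exponent)
    return (before - after) / before


for n in (10, 1_000, 100_000):
    print(f"user {n + 1:>7,}: relative improvement {marginal_gain(n):.4%}")
```

Under this assumption the 1,001st user improves the product by a small fraction of a percent, and the gain keeps shrinking as the user base grows. A real network effect needs a mechanism that breaks this pattern.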

Myth 4: First Mover Advantage

The claim: We were first to market, so we have established brand and customer relationships.

The reality: First mover advantage is mostly myth in AI. The technology evolves so fast that being first often means building on inferior foundations. Second movers can learn from your mistakes and build on better infrastructure. ChatGPT was not first to market with chatbots. It was best to market with a specific approach at a specific moment.

Myth 5: Our Team Is the Moat

The claim: We have assembled world-class AI talent that competitors cannot replicate.

The reality: As we covered in Post 4, team quality is double-edged. Premium talent attracts hyperscaler poaching. Your team moat can walk out the door and join Google tomorrow. Teams are necessary but not sufficient for defensibility.

What Actually Creates Defensibility in AI

If the common moat claims are mostly mythology, what actually creates defensibility in AI companies? Here are the real moats:

Real Moat 1: Deep Integration Complexity

When your product is deeply integrated into customer workflows, switching costs become prohibitive. This is not about calling APIs. It is about being embedded in Salesforce workflows, Epic clinical systems, or SAP financial processes. Replacing you requires change management, retraining, data migration, and months of implementation work. That friction survives technology commoditization.

Real Moat 2: Regulatory Certification

HIPAA compliance, SOC 2 certification, FDA clearance, FedRAMP authorization. These take 12-36 months to achieve and cannot be shortcut. Competitors must invest the same time and resources. Compliance moats are boring but durable.

Real Moat 3: Distribution Advantage

Access to customers beats better technology in most markets. If you have exclusive partnerships, embedded distribution channels, or customer acquisition advantages, those matter more than marginally better AI. A good enough AI with great distribution beats a great AI with no distribution every time.

Real Moat 4: Proprietary Feedback Loops

Not just data, but active feedback loops where product usage generates signals that continuously improve the product in ways competitors cannot replicate. This requires careful system design, not just data accumulation. The improvement must be visible to users and compound over time.

Real Moat 5: Domain Expertise Accumulation

Deep understanding of specific industries that is encoded into product design, training approaches, and customer success processes. This is not just having smart people. It is having institutional knowledge that takes years to develop and is embedded in systems rather than individuals.

The key difference: Real moats are built through sustained execution over time. They cannot be claimed on day one. Fake moats are asserted immediately and evaporate on contact with competition or technology evolution.

How to Spot AI-Washing in Due Diligence

Whether you are an investor evaluating deals or a founder trying to differentiate from AI-washers, here is how to assess authenticity:

Technical Depth Questions:

• What happens if OpenAI or Anthropic raises API prices 10x? If the answer is “we would be out of business,” it is an API wrapper.

• What percentage of your ML team is doing training versus prompt engineering? More prompt engineering suggests light customization.

• What is your inference cost per query? Companies with real AI understand their unit economics. API wrappers often do not.

• Show me your model architecture. If they cannot explain it technically, they probably do not have proprietary technology.
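The 10x pricing question is really a unit economics question, and the arithmetic is short. The figures below are hypothetical (a $0.10 price per query and a $0.02 API cost); substitute a company's real numbers.

```python
def gross_margin(price_per_query: float, api_cost_per_query: float,
                 other_cost_per_query: float = 0.0) -> float:
    """Gross margin as a fraction of revenue for a single query."""
    cost = api_cost_per_query + other_cost_per_query
    return (price_per_query - cost) / price_per_query


# Hypothetical figures: the startup charges $0.10 per query and pays $0.02 to the API provider.
price, api_cost = 0.10, 0.02

before = gross_margin(price, api_cost)
after = gross_margin(price, api_cost * 10)  # the 10x price shock

print(f"margin today:     {before:.0%}")  # 80%
print(f"margin after 10x: {after:.0%}")   # -100%
```

An 80% margin turns into losing a dollar for every dollar of revenue. A founder who knows their inference cost per query can run this calculation on the spot.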

Moat Validation Questions:

• If GPT-5 launches with native capability for your use case, what happens? Honest answer: “We would need to differentiate on something else.”

• What would a well-funded competitor need to replicate your technology? If the answer is “six months and $2M,” you do not have a moat.

• How long would it take a customer to switch to a competitor? If the answer is “one weekend,” you do not have integration depth.

• What data do you have that no one else can get? Be specific. If the answer is vague, the moat is probably vague too.

The best founders are honest about their moat limitations. They say things like: “Our current moat is execution speed. We need to build integration depth before commoditization catches us.” That honesty signals sophistication and realistic planning.

The VC Risk Swap: Rewarding Real Defensibility

AI-washing thrives because traditional equity structures reward claims over proof. If you can convince investors you have a moat, you get the valuation premium regardless of whether the moat actually exists. The VC Risk Swap creates different incentives by validating defensibility through demonstrated progress rather than claimed differentiation.

How the VC Risk Swap Addresses AI-Washing:

Milestone-based validation: Instead of pricing rounds based on moat claims, the VC Risk Swap releases capital as milestones demonstrate actual defensibility. First enterprise contract with meaningful switching costs triggers additional funding. Regulatory certification unlocks next tranche. Moats are proven, not asserted.

Revenue share reflects real traction: API wrappers with no moat struggle to retain customers and generate sustainable revenue. The revenue share component of the VC Risk Swap rewards companies that actually retain customers because they have real switching costs. Companies with fake moats show customer churn that reduces funder returns, creating natural selection pressure.

Insurance hedges moat failure: If claimed moats evaporate on contact with GPT-5, traditional equity investors lose everything. The insurance component of the VC Risk Swap provides downside protection independent of whether moat claims prove accurate. Funders can back companies with uncertain defensibility because the structure hedges moat risk directly.

Five-year horizon tests durability: Fake moats collapse within 12-18 months. Real moats strengthen over time. The five-year structure of the VC Risk Swap creates enough duration to distinguish sustainable defensibility from temporary advantage. Companies that maintain customer retention and revenue growth through multiple foundation model generations have proven something real.
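The milestone mechanism described above can be sketched as a simple release schedule. This is a minimal illustration, not the actual VC Risk Swap terms: the tranche amounts are invented, and the rule that tranches unlock strictly in order is an assumption made for this example.

```python
from dataclasses import dataclass


@dataclass
class Milestone:
    name: str
    tranche: float         # capital released once the milestone is verified
    achieved: bool = False


def released_capital(milestones: list[Milestone]) -> float:
    """Sum the tranches for milestones verified so far, in order.

    Assumption: release stops at the first unmet milestone, so a later
    tranche cannot be unlocked by skipping an earlier proof point.
    """
    total = 0.0
    for m in milestones:
        if not m.achieved:
            break
        total += m.tranche
    return total


# Hypothetical plan; dollar amounts are for illustration only.
plan = [
    Milestone("initial close", 500_000, achieved=True),
    Milestone("first enterprise contract with meaningful switching costs", 1_000_000, achieved=True),
    Milestone("regulatory certification", 1_500_000),
]

committed = sum(m.tranche for m in plan)
print(f"released {released_capital(plan):,.0f} of {committed:,.0f}")  # released 1,500,000 of 3,000,000
```

Half the committed capital stays undeployed until the certification is actually in hand, which is the point: the moat is paid for when proven, not when asserted.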

Why This Matters:

• Aligns incentives with truth: Companies benefit from building real moats rather than claiming fake ones

• Protects funders from AI-washing: Milestone validation catches fake moats before full capital deployment

• Rewards execution over storytelling: Companies that build integration depth and compliance moats get funded for doing so

• Creates time for moat building: Five-year horizon allows companies to develop durable defensibility

• Hedges uncertainty: Insurance protects against moat claims that prove incorrect
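To make the revenue share and five-year horizon points concrete, the sketch below compares what a funder collects from two companies that differ only in customer retention. Every number is hypothetical, including the 5% revenue share rate, and the model ignores new sales to isolate the effect of churn.

```python
def cumulative_revenue(starting_arr: float, annual_retention: float, years: int = 5) -> float:
    """Total revenue over `years` from the starting customer base, with no new sales,
    assuming a constant annual revenue retention rate."""
    total, arr = 0.0, starting_arr
    for _ in range(years):
        total += arr
        arr *= annual_retention
    return total


def funder_return(starting_arr: float, annual_retention: float,
                  share_rate: float = 0.05, years: int = 5) -> float:
    """Funder proceeds under a flat revenue share (share_rate is a hypothetical term)."""
    return share_rate * cumulative_revenue(starting_arr, annual_retention, years)


sticky = funder_return(1_000_000, annual_retention=0.90)   # real switching costs
leaky = funder_return(1_000_000, annual_retention=0.50)    # customers leave easily

print(f"90% retention: {sticky:,.0f}")
print(f"50% retention: {leaky:,.0f}")
```

With the same starting revenue, the high-retention company returns roughly twice as much to the funder over five years. That gap is the selection pressure the structure relies on.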

[IMAGE SUGGESTION: Flow diagram showing Traditional Equity (Claims -> Valuation -> Investment -> Reality Check -> Loss) versus VC Risk Swap (Claims -> Milestone Validation -> Proven -> Investment Continues). Alt text: Visual comparison of how traditional equity rewards claims while VC Risk Swap rewards proven defensibility]

What This Means for Founders and Investors

For Founders:

• Be honest about where you are on the AI authenticity spectrum. Sophisticated investors can tell the difference.

• If you are an API wrapper, own it. Build integration depth and compliance moats rather than pretending you have technology moats.

• Moats take time to build. Have a realistic timeline for developing real defensibility and communicate that plan clearly.

• Consider funding structures that reward moat building rather than moat claiming.

For Investors:

• Develop technical due diligence capability. You cannot spot AI-washing without technical depth.

• Ask the hard moat validation questions. Founders who cannot answer them honestly are either confused or deceptive.

• Price in moat uncertainty. If you cannot validate the moat, do not pay the premium.

• Consider structures like the VC Risk Swap that validate defensibility through milestones rather than claims.

The AI-washing epidemic is a symptom of misaligned incentives in traditional equity financing. Structures that reward demonstrated defensibility over claimed defensibility will separate real AI companies from pretenders.

💡 KEY TAKEAWAYS

Remember These Core Principles:

• AI-washing commands premiums but creates risk: A roughly 42% seed-stage valuation premium for AI branding incentivizes false claims

• Most claimed moats are mythology: Data, model, network effect, first mover, and team moats rarely survive scrutiny

• Real moats require sustained execution: Integration depth, compliance, distribution, and domain expertise take time

• The VC Risk Swap rewards real defensibility: Milestone validation catches fake moats before full investment

• Honesty beats hype: Sophisticated investors and sustainable businesses both benefit from moat realism

❓ FREQUENTLY ASKED QUESTIONS

Q: What is AI-washing and why does it matter?

A: AI-washing is adding AI to company positioning regardless of actual technology, similar to greenwashing. It matters because AI-branded companies command roughly 42% higher seed valuations. This creates incentive to claim AI capabilities that do not exist, leading to mispricing, disappointed investors, and value destruction when reality emerges.

Q: How can you tell if an AI company is really an API wrapper?

A: Ask what happens if OpenAI raises prices 10x. Ask what percentage of the ML team does training versus prompt engineering. Ask about inference cost per query. Request model architecture explanation. API wrappers cannot answer these questions satisfactorily because their core technology is rented from foundation model providers rather than built internally.

Q: Why do most data moat claims fail?

A: Most data moat claims fail three tests: uniqueness (can competitors acquire similar data?), scale (is it large enough to beat foundation models?), and defensibility (are there regulatory or IP issues?). Companies often claim data moats based on modest data accumulation that is neither unique nor large enough to create sustainable advantage against well-funded competitors.

Q: What creates real defensibility in AI companies?

A: Real moats include deep integration complexity that creates switching costs, regulatory certifications like HIPAA and SOC 2 that take years to replicate, distribution advantages that provide customer access, proprietary feedback loops that continuously improve products, and accumulated domain expertise embedded in systems rather than individuals. These require sustained execution over time.

Q: How does the VC Risk Swap address AI-washing problems?

A: The VC Risk Swap validates defensibility through demonstrated milestones rather than claimed moats. Capital releases as integration depth, regulatory certification, and customer retention prove real switching costs. Revenue share rewards actual traction rather than projected metrics. Insurance hedges moat failure if claims prove incorrect. The five-year structure creates duration to distinguish temporary advantage from sustainable defensibility.

🎯 READY TO BUILD REAL DEFENSIBILITY?

Understanding the difference between claimed and real moats is essential for AI startup success. The VC Risk Swap rewards companies that build genuine defensibility through sustained execution.

Subscribe to SaferWealth for insights on alternative startup funding structures, AI commercialization strategies, and the VC Risk Swap framework. Join founders and funders who are building better capital structures for the AI era.

Have questions about your specific situation? Drop a comment below or reach out directly. I respond to every message.

📖 RELATED READING

Continue Your Learning:

• NFX - Network Effects Bible: Comprehensive guide to understanding and building network effects in startups.

• Sequoia - Defensibility Framework: How to evaluate competitive moats and sustainable advantages.

• a16z - AI Canon: Curated resources for understanding AI technology and business models.

CONNECT WITH SAFERWEALTH

Expand Your Learning Beyond This Post:

1. Web: SaferWealth.com - Alternative Startup Funding Structures

2. YouTube: TheCapitalToolkit - VC Risk Swap Educational Content

3. LinkedIn: @SaferWealth - Startup Finance Innovation

4. Rumble: @saferwealth - Educational video content on alternative funding

5. Instagram: @saferwealth - Quick insights and updates

👤 ABOUT THE AUTHOR

Sean Cavanagh, BAS, CPA, CA, CF, CBV

With over three decades in business valuations, M&A advisory, and tax structuring, Sean delivers unvarnished truth about startup funding challenges. Starting at Deloitte and Canada Revenue Agency, he now advises founders and funders on alternative capital structures through SaferWealth.com. The VC Risk Swap framework reflects his frustration with funding structures that consistently fail AI startups.

Connect with Sean:

• 🌐 SaferWealth.com

📚 DO YOUR OWN RESEARCH

The concepts discussed in this article are grounded in industry data and market analysis. Below are authoritative sources for readers who want to dive deeper:

Moat & Defensibility Resources:

• Investopedia - Economic Moat

• Investopedia - Network Effects

• Investopedia - Switching Costs

AI Company & Foundation Model Resources:

• OpenAI - GPT-4 Documentation

This section empowers readers to verify information, explore topics deeper, and develop their own informed perspectives on AI defensibility and moat analysis.

⚖️ EDUCATIONAL DISCLAIMER

This guide provides information only, not professional advice. Consult qualified advisors for your specific situation. All cases are fictional, created for educational purposes from collective industry experience. Neither the author nor SaferWealth accepts liability for actions based on this content. This material supplements but never replaces proper professional consultation and judgment.

SaferWealth is an educational platform dedicated to helping founders and funders understand alternative capital structures for AI startups.

© 2026 SaferWealth. All rights reserved.